Intro

This is yet another tale of how things in the IT world often do not turn out the way they seem at first blush. Or possibly a tale of how, just when you think you’ve seen it all after decades in the industry, something new (to you) occurs.

What’s going on

The firewall team was all busy, so when this strange problem came up on Friday they called in the second string: me. I consider some of the team to be less than customer-focused, so I try to compensate for them, and for my lack of knowledge about the firewall, by applying a more customer-first attitude. In other words, a sympathetic listening ear. These days it can be hard just to find someone to complain to about your IT problem, and I am keenly aware of that.

Some communication was failing in a strange way, mediated by a firewall I had never accessed and was not sure I even had access to. So of course I was asked to join a big conference call where an ongoing debugging session was taking place.

I refused.

I hate being blindsided, and I hate not having answers, which makes me sound even less competent than I already am.

But what I did do was begin my research: what the system was, whether I perhaps had access, etc.

Yes. Through a management system I have access to, I found I could view the policies on that particular firewall as well as its logs.

So once I had that up, I agreed to join the call.

They had one server communicating with three different systems. Only one of the three systems was being reached. And yes, the other two were on the same subnet as the one that worked. Two of our firewalls sat between the server and the three systems.

And, yes, I could see some drops. The interesting part was the TCP error: TCP packet out of state, first packet isn’t SYN.

No problem. Routing must be screwed up such that we have asymmetric routing, where the return traffic takes a different path than the outbound traffic, so a firewall sees only half of the conversation. It happens all the time. Right? Well, these systems are really appliances, with only some basic networking settings configurable, no real debugging facility, and really no ability to add a host route.

I could not establish a shell session on the firewall; I wasn’t sure what password naming scheme they used.

Then a real firewall guy comes on the call. But his connectivity is messed up, so I stay with the debug session, if for no other reason than to support him, since four eyes are more effective than two. He shares the routing tables of our two in-line firewalls. They’re hard to follow, as these are all new subnets to me, and some of them don’t look right. But focusing just on possible host routes for any of these three systems, I don’t see anything amiss.

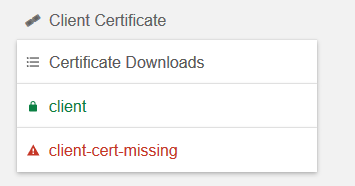

Firewall policy

And in the firewall policy I see that this traffic is permitted for the entire subnet. There is no rule specific to one or another of these systems.

So what do we have up until now?

A purist firewall administrator attitude would be as follows:

The firewall treats all these systems the same, therefore this cannot be a firewall problem. Talk to your networking or system people. Have a nice day.

Well, in fact there were serious questions about the network switch as well, so we had a network guy on the call. They dug up the MAC addresses of these systems and from those found the switch ports. Then they checked the port configuration. Ah, some complex 802.1X authentication was configured. As I understand it, this means the device would not even be allowed onto the subnet until it passed some kind of RADIUS authentication. So they removed the 802.1X configuration and just made sure that port was assigned to the right VLAN.

Still, the problem persisted.

I think the other firewall guy was also new to this equipment. Eventually, though, he runs packet traces comparing the system that’s working with one that isn’t.

You know, I never saw the results of those traces, but reading between the lines I’m pretty sure they surprised him, meaning they did not fit the asymmetric routing hypothesis.

In these situations there is the main conversation on the call, plus side conversations, like the one between me and the firewall guy. But it is all chaotic. Acoustics are mediocre and accents are hard to understand, so the net transfer of information is pretty low. Statements, even important ones, often have to be repeated multiple times (rebroadcasts) to ensure everyone “gets it.”

Typical questions were asked. When did this last work? What had changed? There were a couple of changes: some kind of networking thing (I forget what), and, after that, a move of the firewalls to a new management system. The firewall change seemed closer in time to the last known success.

You acquire more and more information as you dig into problems. It’s hard to judge at the time which of it is relevant and which lines of inquiry are a complete waste of time. A good incident manager or project manager can sense which are the more productive lines of investigation and nurture those discussions while suppressing the noise.

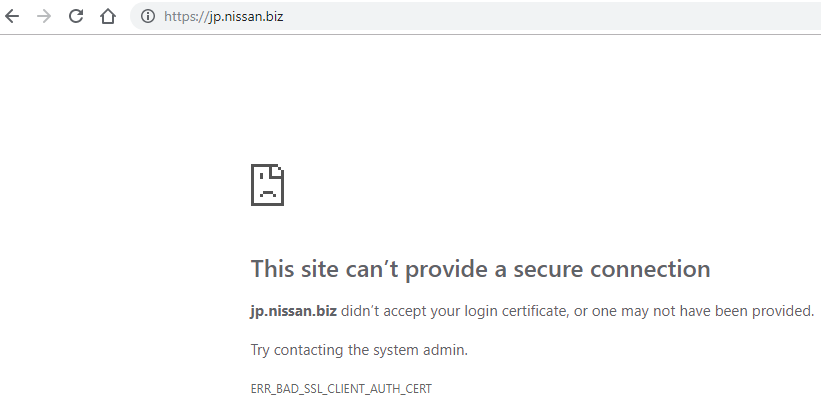

Actually, it was the networking guy who found the Checkpoint link below. I looked at it. The firewall guy was muttering something about badly behaved, older applications that might exhibit this behaviour.

So we agreed to take the suggested steps, which basically allow these out-of-state packets. Drat. The firewall returned an error.

But I keep refreshing the firewall logs. The communication occurs about once a minute. Lo and behold, I see the older drops, and then accepts for the last few minutes! I think it worked. I tell them to check.

They check their end. Sure enough. Communication beginning to work…

The customer tries to assert that this was a firewall problem all along. Not so fast. The firewall guy says, well, the firewall is doing exactly what it’s supposed to be doing. Who’s right?

We’re all good for now, but we state this is a kludge for today and a follow-up meeting needs to occur.

So what happened?

I think the single most important thing is that the firewall guy switched his hypothesis from “must be asymmetric routing” to “maybe it’s a badly behaved application.” Meaning what? What if you have an application that establishes a TCP connection and then, to beat idle timeouts, sends a KEEP ALIVE packet every minute rather than ever opening a new connection? Now suppose your firewall is rebooted in the middle of that because it has changed management stations and needs to reload policy. What might the situation look like to the firewall?

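If you were unlucky, it just might see these KEEP ALIVE TCP packets without having the connection in its connection table. A keep-alive is an ordinary ACK, not a SYN, so to the firewall it looks like the middle of a conversation it never saw start. In other words, exactly the “first packet isn’t SYN” situation we were observing!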
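To make the mechanism concrete, here is a toy model in Python of a stateful firewall’s connection table. It is a hypothetical simplification, not Checkpoint’s actual logic: real firewalls also track sequence numbers, timeouts and much more, and the addresses and ports below are made up. It replays a handshake, one keep-alive, a reboot, and then the next keep-alive.

```python
from dataclasses import dataclass

DROP = "drop: TCP packet out of state, first packet isn't SYN"


@dataclass(frozen=True)
class Packet:
    src: str
    sport: int
    dst: str
    dport: int
    flags: frozenset  # TCP flags set on this segment, e.g. {"SYN"} or {"ACK"}


def pkt(frm, to, *flags):
    """Build a Packet from (ip, port) endpoint tuples."""
    return Packet(frm[0], frm[1], to[0], to[1], frozenset(flags))


class ToyStatefulFirewall:
    """Hypothetical, heavily simplified stateful filter (not any vendor's code)."""

    def __init__(self):
        self.conn_table = set()  # flows this firewall has seen being opened

    @staticmethod
    def _key(p):
        # Order the two endpoints so both directions of a flow share one key.
        a, b = (p.src, p.sport), (p.dst, p.dport)
        return (a, b) if a <= b else (b, a)

    def handle(self, p):
        key = self._key(p)
        if key in self.conn_table:
            return "accept"  # known flow: mid-stream packets pass
        if p.flags == frozenset({"SYN"}):
            # The policy permits the whole subnet, so any new SYN opens a flow.
            self.conn_table.add(key)
            return "accept"
        return DROP  # no table entry and not a SYN: out of state

    def reboot(self):
        # A reboot (or any state-losing policy reload) empties the table.
        self.conn_table.clear()


def run_scenario():
    fw = ToyStatefulFirewall()
    app, srv = ("10.0.0.5", 40000), ("10.0.1.20", 9000)  # hypothetical endpoints
    log = [
        fw.handle(pkt(app, srv, "SYN")),  # three-way handshake
        fw.handle(pkt(srv, app, "SYN", "ACK")),
        fw.handle(pkt(app, srv, "ACK")),
        fw.handle(pkt(app, srv, "ACK")),  # keep-alive a minute later
    ]
    fw.reboot()  # management change, firewall reloads
    log.append(fw.handle(pkt(app, srv, "ACK")))  # next keep-alive
    return log


for entry in run_scenario():
    print(entry)
```

The first four packets are accepted and the keep-alive after the reboot is dropped with the out-of-state message. The same toy also drops a lone SYN-ACK at a firewall that never saw the matching SYN, which is how asymmetric routing looks to it; that is why both hypotheses can produce the very same log line.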

What should have happened?

It would have been great if the communication were forced to be re-established from time to time, even once a day. This problem had been going on for days.

But given this very stupid behaviour on the part of the application, the app people, had they been aware, should have forced it to re-establish its TCP connection after the firewall reboot. Probably the one system that did work had been forced to re-establish its connection.
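For an application that can actually be changed (unlike these appliances), the remedy might look something like this minimal Python sketch. The class name `ReconnectingClient`, the `max_age_s` parameter and the once-a-day default are hypothetical choices for illustration. Every new connection begins with a fresh three-way handshake, so a firewall that has lost its state simply builds a new table entry.

```python
import socket
import time


class ReconnectingClient:
    """Hypothetical client that never lets one TCP connection live forever."""

    def __init__(self, host, port, max_age_s=24 * 3600, timeout_s=10.0):
        self.addr = (host, port)
        self.max_age_s = max_age_s  # assumed policy: reconnect at least daily
        self.timeout_s = timeout_s
        self.sock = None
        self.opened_at = 0.0
        self.connects = 0

    def _connect(self):
        self.close()
        # A fresh handshake (SYN, SYN-ACK, ACK) lets every stateful
        # firewall in the path create a new connection-table entry.
        self.sock = socket.create_connection(self.addr, timeout=self.timeout_s)
        self.opened_at = time.monotonic()
        self.connects += 1

    def close(self):
        if self.sock is not None:
            self.sock.close()
            self.sock = None

    def send(self, payload: bytes):
        too_old = time.monotonic() - self.opened_at > self.max_age_s
        if self.sock is None or too_old:
            self._connect()
        try:
            self.sock.sendall(payload)
        except OSError:
            # e.g. a reset: retry once on a brand-new connection
            self._connect()
            self.sock.sendall(payload)
```

One caveat on the design: when a firewall silently drops packets, `sendall` usually still succeeds, because the data only goes into the local send buffer and the failure surfaces later as retransmission timeouts. So the scheduled reconnect is the dependable part; the retry branch mostly catches resets.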

A firewall person has to be sufficiently aware to realize this could be happening, and advise the app owner on what to do to prevent it.

Conclusion

So whose problem is it?

To the app people it looks like a firewall issue, cut-and-dried. To a firewall guy it looks like an application issue, cut-and-dried. I see both sides. It is some of both. An app owner has to understand enough about firewalls to see that this type of thing can occur. Assigning blame to one side or the other, as most people are wont to do, is not productive. Only a team effort could have revealed this issue. And recall that the “fix” is actually a kludge that lowers security.

Case: almost closed.

References and related

Checkpoint’s note on TCP packet out of state first packet isn’t SYN: https://community.checkpoint.com/t5/General-Topics/TCP-packet-out-of-state-First-packet-isn-t-SYN-tcp-flags-SYN-ACK/td-p/37166

The IT Detective Agency cases are still coming fast and furious. Here’s another recent case: Failed to convert character.