Intro

This is a small piece of a larger project – displaying your photos on Google Drive using a Raspberry Pi. That project will require completion of many small investigations, this being just one of them.

I thought, wouldn’t it be cool to ask your photo frame when and where a certain picture was taken? I thought that information was typically embedded into the picture by modern smartphones. Turns out this is disappointingly not the case – at least not on our smartphones, except in a small minority of pictures. But since I got somewhere with my investigation, I wanted to share the results, regardless.

Also, I naively assumed that there surely is a web service that permits one to easily convert GPS coordinates into the name – in text – of the closest town. After all, you can enter GPS coordinates into Google Maps and get back a map showing the exact location. Why shouldn’t it be just as easy to extract the nearest town name as text? Again, this assumption turns out to be faulty. But, I found a way to do it that is not toooo difficult.

Example for Cape May, New Jersey

$ curl -s 'http://api.geonames.org/address?lat=38.9302957777778&lng=-74.9183310833333&username=drjohns'

<geonames>

<address>

<street>Beach Dr</street>

<houseNumber>690</houseNumber>

<locality>Cape May</locality>

<postalcode>08204</postalcode>

<lng>-74.91835</lng>

<lat>38.93054</lat>

<adminCode1>NJ</adminCode1>

<adminName1>New Jersey</adminName1>

<adminCode2>009</adminCode2>

<adminName2>Cape May</adminName2>

<adminCode3/>

<adminCode4/>

<countryCode>US</countryCode>

<distance>0.03</distance>

</address>

</geonames>

The above example used the address service. The results in this case are unusually complete. Sometimes the lookups simply fail for no obvious reason, or provide incomplete information, such as a missing locality. In those cases the town name is usually still reported in the adminName2 element. I haven’t checked the address accuracy much, but it seems pretty accurate: it typically represents an actual address within 100 yards, usually better, of where the picture was taken.
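The fallback just described (use locality when it is present, otherwise adminName2) can be sketched in Python. This is only an illustration and not part of the original script: the helper name town_from_address is made up, and the sample XML is a trimmed copy of the Cape May response shown above.

```python
import xml.etree.ElementTree as ET

# Trimmed copy of the Cape May response shown above.
SAMPLE = """<geonames>
<address>
<street>Beach Dr</street>
<houseNumber>690</houseNumber>
<locality>Cape May</locality>
<postalcode>08204</postalcode>
<adminName1>New Jersey</adminName1>
<adminName2>Cape May</adminName2>
</address>
</geonames>"""


def town_from_address(xml_text):
    """Return locality if present, else adminName2, else None (hypothetical helper)."""
    address = ET.fromstring(xml_text).find("address")
    if address is None:
        return None  # the lookup failed or returned nothing useful
    for tag in ("locality", "adminName2"):
        value = address.findtext(tag)
        if value and value.strip():
            return value.strip()
    return None


print(town_from_address(SAMPLE))
```

A real parser avoids the surprises you can get from grepping XML with regular expressions, at the cost of a few more lines.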

They have another service, findNearbyPlaceName, which sometimes works even when address fails. However, its results are also unpredictable. I was in Merrillville, Indiana and it gave the toponym as Chapel Manor, which is the name of the subdivision! In Virginia it gave the name The Hamlet – still not sure where that came from, but I trust it is some hyper-local name for a section of the town (James City). Just as often it does spit back the town or city name, for instance, Atlantic City. So, it’s better than nothing.

The example for Nantucket

From a browser – here I use curl in the linux command line – you enter:

$ curl -s 'http://api.geonames.org/findNearbyPlaceName?lat=41.282778&lng=-70.099444&username=drjohns'

<?xml version="1.0" encoding="UTF-8" standalone="no"?>

<geonames>

<geoname>

<toponymName>Nantucket</toponymName>

<name>Nantucket</name>

<lat>41.28346</lat>

<lng>-70.09946</lng>

<geonameId>4944903</geonameId>

<countryCode>US</countryCode>

<countryName>United States</countryName>

<fcl>P</fcl>

<fcode>PPLA2</fcode>

<distance>0.07534</distance>

</geoname>

</geonames>

So what did we do? For this example I looked up Nantucket in Wikipedia to find its GPS coordinates. Then I used the geonames api to convert those coordinates into the town name, Nantucket.
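If you would rather build the request URL in a program than type it into a shell (where the & characters need quoting), something like the following Python sketch may help. The helper name geonames_url is hypothetical; only the service name and parameters come from the example above. It deliberately does not fetch anything, so it uses no lookup credits.

```python
from urllib.parse import urlencode

API_BASE = "http://api.geonames.org"


def geonames_url(service, username, **params):
    """Build a GeoNames request URL with properly encoded query parameters."""
    query = urlencode({**params, "username": username})
    return f"{API_BASE}/{service}?{query}"


url = geonames_url("findNearbyPlaceName", "drjohns", lat=41.282778, lng=-70.099444)
print(url)
```

The resulting string could then be handed to urllib.request.urlopen or to curl.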

Note that drjohns is an actual registered username with geonames. I am counting on the unpopularity of my posts to prevent an onslaught of usage as the usage credits are limited for free accounts. If I understood the terms, a few lookups per hour would not be an issue.

I’m finding the PlaceName lookup pretty useless, and the address lookup fails about 30% of the time, so I’m thinking of using this sort of lookup as a backstop:

$ curl 'http://api.geonames.org/extendedFindNearby?lat=41.00050&lng=-74.65329&username=drjohns'

<?xml version="1.0" encoding="UTF-8" standalone="no"?>

<geonames>

<address>

<street>Stanhope Rd</street>

<mtfcc>S1400</mtfcc>

<streetNumber>439</streetNumber>

<lat>41.00072</lat>

<lng>-74.6554</lng>

<distance>0.18</distance>

<postalcode>07871</postalcode>

<placename>Lake Mohawk</placename>

<adminCode2>037</adminCode2>

<adminName2>Sussex</adminName2>

<adminCode1>NJ</adminCode1>

<adminName1>New Jersey</adminName1>

<countryCode>US</countryCode>

</address>

</geonames>

Note that this gets a reasonably close address and, more importantly, a zipcode. The placename is too local and I will probably discard it. But another lookup can turn a zipcode into a town or city name, which is what I am after.

$ curl 'http://api.geonames.org/postalCodeSearch?country=US&postalcode=07871&username=drjohns'

<?xml version="1.0" encoding="UTF-8" standalone="no"?>

<geonames>

<totalResultsCount>1</totalResultsCount>

<code>

<postalcode>07871</postalcode>

<name>Sparta</name>

<countryCode>US</countryCode>

<lat>41.0277</lat>

<lng>-74.6407</lng>

<adminCode1 ISO3166-2="NJ">NJ</adminCode1>

<adminName1>New Jersey</adminName1>

<adminCode2>037</adminCode2>

<adminName2>Sussex</adminName2>

<adminCode3/>

<adminName3/>

</code>

</geonames>

See? It was a lot of work, but we finally got the township name, Sparta, returned to us.
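The two-step chain (extendedFindNearby for a zipcode, then postalCodeSearch for the town) might look like this in Python. It is a sketch under assumptions: the function names are invented for illustration, and the XML strings are trimmed copies of the two responses shown above rather than live results.

```python
import xml.etree.ElementTree as ET

# Trimmed copies of the two responses shown above.
NEARBY_XML = """<geonames>
<address>
<street>Stanhope Rd</street>
<postalcode>07871</postalcode>
<placename>Lake Mohawk</placename>
<adminName2>Sussex</adminName2>
<adminName1>New Jersey</adminName1>
<countryCode>US</countryCode>
</address>
</geonames>"""

POSTAL_XML = """<geonames>
<totalResultsCount>1</totalResultsCount>
<code>
<postalcode>07871</postalcode>
<name>Sparta</name>
<countryCode>US</countryCode>
</code>
</geonames>"""


def postalcode_from_nearby(xml_text):
    """Pull the zipcode out of an extendedFindNearby address result, or None."""
    return ET.fromstring(xml_text).findtext("address/postalcode")


def town_from_postal(xml_text):
    """Pull the town name out of a postalCodeSearch result, or None."""
    return ET.fromstring(xml_text).findtext("code/name")


zipcode = postalcode_from_nearby(NEARBY_XML)
town = town_from_postal(POSTAL_XML)
print(zipcode, town)
```

Note that the zipcode is kept as a string, so the leading zero in 07871 survives.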

Ocean GPS?

I was whale-watching and took some pictures with GPS info. Trying to apply the methods above worked, but just barely. Basically all I could get out of the extended find nearby search was a name field with value North Atlantic Ocean! Well, that makes it sound like I was on some Titanic-style ocean crossing. In fact I was in the Gulf of Maine a few miles from Provincetown. So they really could have done a better job there… Of course it’s understandable to not have a postalcode and street address and such. But still, bodies of water have names and geographical boundaries as well. Casinos seem to be the main sponsors of geonames.org, and I guess they don’t care. Yesterday my script came up with the location Earth! But now I see geonames proposed several locations and I was only looking at the first one. I am creating a refinement which will perform better in such cases. Stay tuned… And…yes…the refinement is done. I had to do a wee bit of xml parsing, which I now do.

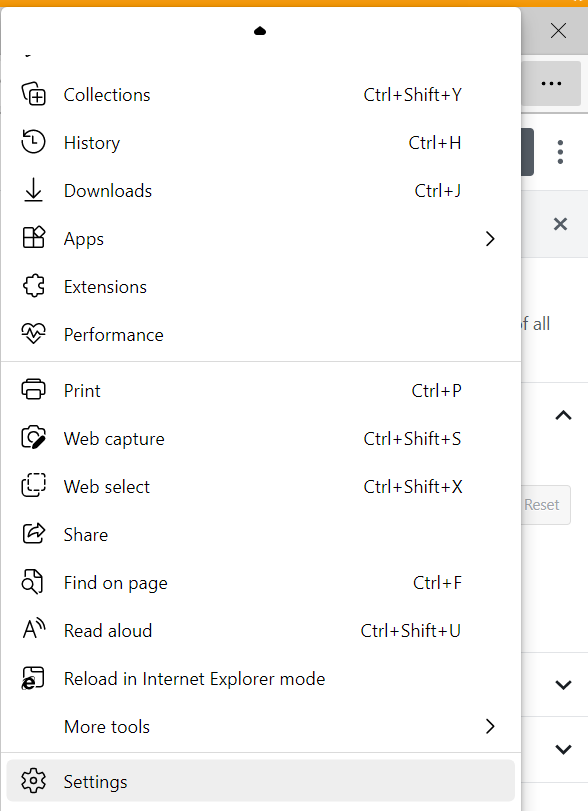

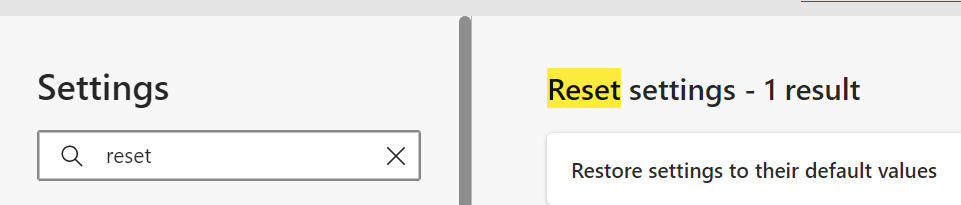
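The refinement mentioned above, picking the closest of several returned locations instead of the first, can be sketched in Python as follows. Everything here is illustrative: the sample response is made up (the names echo the ones mentioned above, the coordinates are invented), and closest_name is a hypothetical helper. It uses the same crude squared difference in degrees as the Perl program further down, which is fine for ranking a handful of candidates but is not a true distance.

```python
import xml.etree.ElementTree as ET

# Made-up response for illustration only; the coordinates are invented.
SAMPLE = """<geonames>
<geoname><name>Earth</name><lat>0</lat><lng>0</lng></geoname>
<geoname><name>North Atlantic Ocean</name><lat>30.0</lat><lng>-40.0</lng></geoname>
</geonames>"""


def closest_name(xml_text, lat, lng):
    """Return the name of the geoname entry nearest to (lat, lng), or None."""
    best_name, best_dist = None, float("inf")
    for entry in ET.fromstring(xml_text).findall("geoname"):
        try:
            entry_lat = float(entry.findtext("lat"))
            entry_lng = float(entry.findtext("lng"))
        except (TypeError, ValueError):
            continue  # skip entries without usable coordinates
        dist = (lat - entry_lat) ** 2 + (lng - entry_lng) ** 2
        if dist < best_dist:
            # remember the best candidate so far, and its distance
            best_name, best_dist = entry.findtext("name"), dist
    return best_name


print(closest_name(SAMPLE, 42.1, -70.2))
```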

To get your own account at geonames.org

The process of getting your own account isn’t too difficult, just a bit squirrelly. For the record, here is what you do.

Go to http://www.geonames.org/login to create your account. Oh, and be sure to use a unique browser-generated password for this one. The security level is off-the-charts awful – just assume that any and all hackers who want that password are going to get it. It sends you a confirmation email. So far so good. But when you then try to use your username in an api call, it will tell you that that username isn’t known. This is the tricky part.

So go to https://www.geonames.org/manageaccount . It will say:

Free Web Services

the account is not yet enabled to use the free web services. Click here to enable.

And that link, in turn, is https://www.geonames.org/enablefreewebservice . Once you have enabled your account for the api web service, the URL, with your username in place of drjohns, ought to work!

For a complete overview of all the different things you can find out from the GPS coordinates from geonames, look at this link: https://www.geonames.org/export/ws-overview.html

Working with pictures

Please look at this post for the python code to extract the metadata from an image, including, if available, GPS info. I called the python program getinfo.py.

Here’s an actual example of running it to learn the GPS info:

$ ../getinfo.py 20170520_102248.jpg|grep -ai gps

GPSInfo = {0: b'\x02\x02\x00\x00', 1: 'N', 2: (42.0, 2.0, 18.6838), 3: 'W', 4: (70.0, 4.0, 27.5448), 5: b'\x00', 6: 0.0, 7: (14.0, 22.0, 25.0), 29: '2017:05:20'}

I don’t know if it’s good or bad, but the GPS coordinates seem to be encoded in the degrees, minutes, seconds format.
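Converting degrees, minutes, seconds into the decimal degrees that geonames expects is simple arithmetic: degrees + minutes/60 + seconds/3600, negated for the southern and western hemispheres. Here is a minimal Python sketch using the values from the GPSInfo line above; the function name dms_to_decimal is just for illustration.

```python
def dms_to_decimal(dms, ref):
    """Convert a (degrees, minutes, seconds) tuple plus N/S/E/W into signed decimal degrees."""
    degrees, minutes, seconds = dms
    value = degrees + minutes / 60.0 + seconds / 3600.0
    # South latitudes and west longitudes are negative by convention
    return -value if ref.upper() in ("S", "W") else value


# Values taken from the GPSInfo output above
lat = dms_to_decimal((42.0, 2.0, 18.6838), "N")
lng = dms_to_decimal((70.0, 4.0, 27.5448), "W")
print(round(lat, 6), round(lng, 6))
```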

A nice little program to put things together

I call it analyzeGPS.pl and am using it on a Raspberry Pi, but it could easily be adapted to any linux system.

#!/usr/bin/perl

# use in combination with this post https://drjohnstechtalk.com/blog/2020/12/convert-gps-coordinates-into-town-name/

use POSIX;

$DEBUG = 1;

$HOME = "/home/pi";

#$file = "Pictures/20180422_134220.jpg";

while(<>){

$GPS = $date = 0;

$gpsinfo = "";

chomp($file = $_); # strip the trailing newline, if any

open(ANAL,"$HOME/getinfo.py \"$file\"|") || die "Cannot open file: $file!!\n";

#open(ANAL,"cat \"$file\"|") || die "Cannot open file: $file!!\n";

print STDERR "filename: $file\n" if $DEBUG;

while(<ANAL>){

$postalcode = $town = $name = "";

if (/GPS/i) {

print STDERR "GPS: $_" if $DEBUG;

# GPSInfo = {1: 'N', 2: (39.0, 21.0, 22.5226), 3: 'W', 4: (74.0, 25.0, 40.0267), 5: 1.7, 6: 0.0, 7: (23.0, 4.0, 14.0), 29: '2016:07:22'}

($pole,$deg,$min,$sec,$hemi,$lngdeg,$lngmin,$lngsec) = /1: '([NS])', 2: \(([\d\.]+), ([\d\.]+), ([\d\.]+)...3: '([EW])', 4: \(([\d\.]+), ([\d\.]+), ([\d\.]+)\)/i;

print STDERR "$pole,$deg,$min,$sec,$hemi,$lngdeg,$lngmin,$lngsec\n" if $DEBUG;

$lat = $deg + $min/60.0 + $sec/3600.0;

$lat = -$lat if $pole eq "S";

$lng = $lngdeg + $lngmin/60.0 + $lngsec/3600.0;

$lng = -$lng if $hemi eq "W" || $hemi eq "w";

print STDERR "lat,lng: $lat, $lng\n" if $DEBUG;

#$placename = `curl -s "$url"|grep -i toponym`;

next if $lat == 0 && $lng == 0;

# the address API is the most precise

$url = "http://api.geonames.org/address?lat=$lat\&lng=$lng\&username=drjohns";

print STDERR "Url: $url\n" if $DEBUG;

$results = `curl -s "$url"|egrep -i 'street|house|locality|postal|adminName'`;

print STDERR "results: $results\n" if $DEBUG;

($street) = $results =~ /street>(.+)</;

($houseNumber) = $results =~ /houseNumber>(.+)</;

($postalcode) = $results =~ /postalcode>(.+)</;

($state) = $results =~ /adminName1>(.+)</;

($town) = $results =~ /locality>(.+)</;

print STDERR "street, houseNumber, postalcode, state, town: $street, $houseNumber, $postalcode, $state, $town\n" if $DEBUG;

# I think locality is pretty good name. If it exists, don't go further

$postalcode = "" if $town;

if (!$postalcode && !$town){

# we are here if we didn't get interesting results from address reverse lookup, which often happens.

$url = "http://api.geonames.org/extendedFindNearby?lat=$lat\&lng=$lng\&username=drjohns";

print STDERR "Address didn't work out. Trying extendedFindNearby instead. Url: $url\n" if $DEBUG;

$results = `curl -s "$url"`;

# parse results - there may be several objects returned

$topelemnt = $results =~ /<geoname>/i ? "geoname" : "geonames";

@elmnts = ("street","streetnumber","lat","lng","locality","postalcode","countrycode","countryname","name","adminName2","adminName1");

$cnt = xml1levelparse($results,$topelemnt,@elmnts);

@lati = @{ $xmlhash{lat}};

@long = @{ $xmlhash{lng}};

# find the closest entry

$distmax = 1E7;

for($i=0;$i<$cnt;$i++){

$dist = ($lat - $lati[$i])**2 + ($lng - $long[$i])**2;

print STDERR "dist,lati,long: $dist, $lati[$i], $long[$i]\n" if $DEBUG;

if ($dist < $distmax) {

print STDERR "dist < distmax condition. i is: $i\n";

$distmax = $dist; # remember the smallest distance seen so far

$isave = $i;

}

}

$street = @{ $xmlhash{street}}[$isave];

$houseNumber = @{ $xmlhash{streetnumber}}[$isave];

$admn2 = @{ $xmlhash{adminName2}}[$isave];

$postalcode = @{ $xmlhash{postalcode}}[$isave];

$name = @{ $xmlhash{name}}[$isave];

$countrycode = @{ $xmlhash{countrycode}}[$isave];

$countryname = @{ $xmlhash{countryname}}[$isave];

$state = @{ $xmlhash{adminName1}}[$isave];

print STDERR "street, houseNumber, postalcode, state, admn2, name: $street, $houseNumber, $postalcode, $state, $admn2, $name\n" if $DEBUG;

if ($countrycode ne "US"){

$state .= " $countryname";

}

$state .= " (approximate)";

}

# turn zipcode into town name with this call

if ($postalcode) {

print STDERR "postalcode $postalcode exists, let's convert to a town name\n";

print STDERR "url: $url\n";

$url = "http://api.geonames.org/postalCodeSearch?country=US\&postalcode=$postalcode\&username=drjohns";

$results = `curl -s "$url"|egrep -i 'name|locality|adminName'`;

($town) = $results =~ /<name>(.+)</i;

print STDERR "results,town: $results,$town\n";

}

if (!$town) {

# no town name, use adminname2 which is who knows what in general

print STDERR "Still no town name. Use adminName2 as next best thing\n";

$town = $admn2;

}

if (!$town) {

# we could be in the ocean! I saw that once, and name was North Atlantic Ocean

print STDERR "Still no town. Try to use name: $name as last resort\n";

$town = $name;

}

$gpsinfo = "$houseNumber $street $town, $state" if $locality || $town;

} # end of GPS info exists condition

} # end loop over ANAL file

$gpsinfo = $gpsinfo || "No info found";

print qq(Location: $gpsinfo

);

} # end loop over STDIN

#####################

# function to parse some xml and fill a hash of arrays

sub xml1levelparse{

# build an array of hashes

$string = shift;

# strip out newline chars

$string =~ s/\n//g;

$parentelement = shift;

@elements = @_;

%xmlhash = (); # reset results left over from any previous call

$i=0;

while($string =~ /<$parentelement>/i){

$i++;

($childelements) = $string =~ /<$parentelement>(.+?)<\/$parentelement>/i;

print STDERR "childelements: $childelements" if $DEBUG;

$string =~ s/<$parentelement>(.+?)<\/$parentelement>//i;

print STDERR "string: $string\n" if $DEBUG;

foreach $element (@elements){

print STDERR "element: $element\n" if $DEBUG;

($value) = $childelements =~ /<$element>([^<]+)<\/$element>/i;

print STDERR "value: $value\n" if $DEBUG;

push @{ $xmlhash{$element} }, $value;

}

} # end of loop over parent elements

return $i;

} # end sub xml1levelparse

Here’s a real example of calling it, one of the more difficult cases:

$ echo -n 20180127_212203.jpg|./analyzeGPS.pl

GPS: GPSInfo = {0: b'\x02\x02\x00\x00', 1: 'N', 2: (41.0, 0.0, 2.75), 3: 'W', 4: (74.0, 39.0, 12.0934), 5: b'\x00', 6: 0.0, 7: (2.0, 21.0, 58.0), 29: '2018:01:28'}

N,41.0,0.0,2.75,W,74.0,39.0,12.0934

lat,lng: 41.0007638888889, -74.6533592777778

Url: http://api.geonames.org/address?lat=41.0007638888889&lng=-74.6533592777778&username=drjohns

results:

street, houseNumber, postalcode, state, town: , , , ,

Address didn't work out. Trying extendedFindNearby instead. Url: http://api.geonames.org/extendedFindNearby?lat=41.0007638888889&lng=-74.6533592777778&username=drjohns

childelements: <address> <street>Stanhope Rd</street> <mtfcc>S1400</mtfcc> <streetNumber>433</streetNumber> <lat>41.00121</lat> <lng>-74.65528</lng> <distance>0.17</distance> <postalcode>07871</postalcode> <placename>Lake Mohawk</placename> <adminCode2>037</adminCode2> <adminName2>Sussex</adminName2> <adminCode1>NJ</adminCode1> <adminName1>New Jersey</adminName1> <countryCode>US</countryCode> </address>string: <?xml version="1.0" encoding="UTF-8" standalone="no"?>

element: street

value: Stanhope Rd

element: streetnumber

value: 433

element: lat

value: 41.00121

element: lng

value: -74.65528

element: locality

value:

element: postalcode

value: 07871

element: countrycode

value: US

element: countryname

value:

element: name

value:

element: adminName2

value: Sussex

element: adminName1

value: New Jersey

dist,lati,long: 3.88818897839883e-06, 41.00121, -74.65528

dist < distmax condition. i is: 0

street, houseNumber, postalcode, state, admn2, name: Stanhope Rd, 433, 07871, New Jersey, Sussex,

postalcode 07871 exists, let's convert to a town name

url: http://api.geonames.org/extendedFindNearby?lat=41.0007638888889&lng=-74.6533592777778&username=drjohns

results,town: <geonames>

<name>Sparta</name>

<adminName1>New Jersey</adminName1>

<adminName2>Sussex</adminName2>

<adminName3/>

</geonames>

,Sparta

Location: 433 Stanhope Rd Sparta, New Jersey (approximate)

Or, if you just want the interesting stuff,

$ echo -n 20180127_212203.jpg|./analyzeGPS.pl 2>/dev/null

Location: 433 Stanhope Rd Sparta, New Jersey (approximate)
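The order in which analyzeGPS.pl settles on a town name (locality from the address lookup, then the town from the zipcode lookup, then adminName2, then name as a last resort) boils down to "first non-empty value wins". A small Python sketch of that idea, with a hypothetical function name and argument names:

```python
def pick_town(locality=None, postal_town=None, admin_name2=None, name=None):
    """Return the first non-empty candidate, in order of preference, or None."""
    for candidate in (locality, postal_town, admin_name2, name):
        if candidate and candidate.strip():
            return candidate.strip()
    return None


# The Sparta case above: no locality, but the zipcode lookup succeeded
print(pick_town(postal_town="Sparta", admin_name2="Sussex"))
```

Keeping the preference order in one place makes it easy to reshuffle if, say, adminName2 turns out to be more useful than the zipcode town for your pictures.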

Bonus section

Convert city to GPS coordinates with geonames

Having the city and country you can use the wikipedia search to turn that into serviceable GPS coordinates. This is sort of the opposite problem from what we did earlier. Several possible matches are returned so you need some discretion to ferret out the correct answer. And sometimes smaller towns are just not found at all and only wild guesses are returned! The curl + URL that I’ve been using for this is:

curl 'http://api.geonames.org/wikipediaSearchJSON?q=Cornwall,Canada&maxRows=10&username=drjohns'

I think if you know the state (or province) you can put that in as well.
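Since several candidates come back, you need some rule for choosing one. Below is a rough Python sketch that takes the first result in the expected country. Treat the details as assumptions: the sample JSON is hand-typed with approximate coordinates (not a captured response), the field names title, countryCode, lat and lng are what I believe the service returns inside its geonames list, and first_match is a made-up helper.

```python
import json

# Hand-typed, approximate sample for illustration; not a captured API response.
SAMPLE = """{"geonames": [
  {"title": "Cornwall", "countryCode": "GB", "lat": 50.4, "lng": -4.9},
  {"title": "Cornwall, Ontario", "countryCode": "CA", "lat": 45.03, "lng": -74.74}
]}"""


def first_match(json_text, country_code):
    """Return (title, lat, lng) of the first result in the given country, or None."""
    for entry in json.loads(json_text).get("geonames", []):
        if entry.get("countryCode") == country_code:
            return entry.get("title"), entry.get("lat"), entry.get("lng")
    return None


print(first_match(SAMPLE, "CA"))
```

Returning None when nothing matches is a reminder that smaller towns are sometimes not found at all.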

Convert GPS coordinates into a GMT offset

The following is partial python code which I have come across and haven’t yet verified myself. But I am excited to learn of it because until now I only knew how to do this with the Geonames api, which I will not be showing because it’s slow, potentially costs money, etc.

from datetime import datetime

from pytz import timezone, utc

from timezonefinder import TimezoneFinder

tf = TimezoneFinder() # reuse

def get_offset(*, lat, lng):

    """

    returns a location's time zone offset from UTC in seconds,

    or None if no time zone is found for the coordinates.

    """

    today = datetime.now()

    tz_name = tf.timezone_at(lng=lng, lat=lat)

    # ATTENTION: timezone_at can return None! handle that case

    if tz_name is None:

        return None

    tz_target = timezone(tz_name)

    today_target = tz_target.localize(today)

    today_utc = utc.localize(today)

    return (today_utc - today_target).total_seconds()

bergamo = {"lat": 45.69, "lng": 9.67}

seconds_offset = get_offset(**bergamo)

print('seconds offset', seconds_offset)

parsippany = {"lat": 40.86, "lng": -74.43}

seconds_offset = get_offset(**parsippany)

print('seconds offset', seconds_offset)

A word about China

Today I tried to see if I could learn the province or county a particular GPS coordinate is in when it is in China, but it did not seem to work. I’m guessing Chinese cities cannot be looked up in the way I’ve shown for my working examples, but I cannot be 100% sure without more research, which I do not plan to do.

Conclusion

An api for reverse lookup of GPS coordinates which returns the nearest address, including town name, is available. I have provided examples of how to use it. It is unreliable, however, and Geonames.org does provide alternatives which have their own drawbacks. In my image gallery, only a minority of my pictures have encoded GPS data, but it is fun to work with them to pluck out the town where they were shot.

I have incorporated this functionality into a Raspberry Pi-based photo frame I am working on.

I have created an example Perl program that analyzes a JPEG image to extract the GPS information and turn it into an address that is remarkably accurate. It is amazing and uncanny to see it at work. It deals with the screwy and inconsistent results returned by the free service, Geonames.org.

References and related

There are lots of different things you can derive given the GPS coordinates using the Geonames api. Here is a list: https://www.geonames.org/export/ws-overview.html

In this photo frame version of mine, I extract all the EXIF metadata which includes the GPS info.

One day my advanced photo frame will hopefully include an option to learn where a photo was taken by interacting with a remote control. Here is the start of that write-up.

You can pay $5 and get a zip codes to cities database in any format. I’m sure they’ve just re-packaged data from elsewhere, but it might be worth it: https://www.uszipcodeslist.com/

For a more professional api, https://smartystreets.com/ looks quite nice. Free level is 250 queries per month, so not too many. But their documentation and usability looks good to me. For this post I was looking for free services and have tried to avoid commercial services.